Description

The Multimedia Timer API should be capable of running at a higher resolution than GetTickCount (which is how it is currently implemented). Multimedia timer accuracy can be adjusted via timeBeginPeriod and timeEndPeriod, neither of which affects GetTickCount.

This causes some applications (particularly games) that should run at a fixed framerate to run too slowly or too fast.

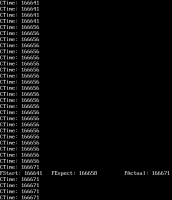

SpinlockTiming.png shows a test case in which an application, after calling timeBeginPeriod(1), sat in a spinlock querying timeGetTime for its frame limiting. FStart is when the frame began, FExpect is when the frame was expected to end, and FActual is when it actually ended. CTime is the value reported by timeGetTime.
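The effect described above can be reproduced on paper with a small simulation. This is only a sketch: `coarse_clock` and `simulate_frames` are hypothetical helper names, the coarse clock is a stand-in for a timeGetTime that advances in large steps, and the 10 ms step and 16 ms frame length are assumed values rather than measurements from ROS.

```c
#include <stdio.h>

/* Hypothetical model of a millisecond clock that only advances once every
   granularity_ms milliseconds. This is NOT a real WinMM call; it stands in
   for a timeGetTime whose resolution is coarser than 1 ms. */
static unsigned long coarse_clock(unsigned long true_ms,
                                  unsigned long granularity_ms)
{
    return (true_ms / granularity_ms) * granularity_ms;
}

/* Simulate a spin-wait frame limiter: each frame records the reported start
   time (FStart) and spins until the reported clock has advanced by at least
   frame_ms (FExpect = FStart + frame_ms). Returns the true elapsed time in
   milliseconds after `frames` frames. Both frame_ms and granularity_ms must
   be greater than zero. */
static unsigned long simulate_frames(unsigned frames,
                                     unsigned long frame_ms,
                                     unsigned long granularity_ms)
{
    unsigned long true_ms = 0;
    unsigned i;

    for (i = 0; i < frames; i++) {
        unsigned long start = coarse_clock(true_ms, granularity_ms);

        /* Spin until the *reported* time says the frame is over. */
        while (coarse_clock(true_ms, granularity_ms) - start < frame_ms)
            true_ms++;
    }
    return true_ms;
}
```

Under these assumptions, `simulate_frames(60, 16, 1)` returns 960, while `simulate_frames(60, 16, 10)` returns 1200: with the coarse clock every frame overshoots to the next 10 ms step, so 60 frames take 1.2 seconds instead of 0.96 and the application runs visibly slower.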

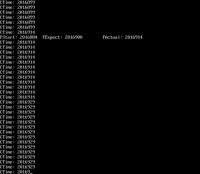

Upon replacing the WinMM DLL in ROS with the WinMM DLL from Windows 2003 SP1, the problem was resolved and the application ran at the intended speed (TimingWinMM2k3.png).

Additionally, it is worth mentioning that the timer should default to 1ms accuracy when the Compatibility Mode is set to Windows 95 or Windows 98. As per the Win32 documentation, it behaves differently on 9x than on NT, which defaults to 5ms+ (usually 10-16ms) accuracy. See https://chat.reactos.org/files/npbrboyzr7g5jmkz3my3dpunge/public?h=gFiwmNSvvUUNLCizw4lWKnR-COLLaRiEww3TbXzUZZo

A poorly written application or game could expect 1ms accuracy even though it never calls timeBeginPeriod(1) to request it. (Developers in the mid 90s may have believed NT was a specialized workstation operating system that few people used and so did not add the call, not knowing that within several years everyone would be using an NT-based operating system.)

Issue Links

- relates to
  - CORE-12227 [QUARTZ] Media Player Classic 1.7.10 stuttering audio & video playback (Open)
  - CORE-13963 Media Player Classic Home Cinema MPC-HC 1.7.13 media progress bar does not progress linearly but has big time-jumps (Open)
  - CORE-15332 XEBRA Version 18/10/27 plays a game very slowly (Resolved)